When Systems Attack Their Sensors

A functioning system depends on feedback. Sensors exist to detect deviation, stress, and failure before damage becomes irreversible. In social systems, those sensors are people and the records they leave behind. Neighbors who complain. Whistleblowers. Logs. Reports. Repeated signals that something is not working.

When a system responds to those signals by correcting the underlying problem, it is functioning.

When it responds by discrediting, punishing, or exhausting the signal source, it is failing in a very specific and dangerous way.

This failure mode can be summarized simply: the system protects itself from information instead of from harm.

What "sensors" actually are

In technical systems, sensors are neutral instruments. In social systems, sensors are human, and that makes them vulnerable.

A neighbor documenting persistent disturbance. A citizen filing repeated complaints. A professional raising concerns through formal channels.

These are not disruptions to the system. They are the system's early warning layer.

Yet social systems often treat them as adversaries.

Why? Because human sensors do not just report data. They introduce accountability.

The cost of response versus the cost of denial

Responding to a real problem usually requires effort, coordination, or expense. It may expose institutional weakness or force difficult decisions.

Attacking the sensor is cheaper.

Discrediting the complainant. Questioning motives. Reframing persistence as obsession. Labeling discomfort as intolerance.

The system remains outwardly stable, at least temporarily. The problem continues, but it is now officially unacknowledged.

This creates a perverse incentive structure.

The clearer and more consistent the signal, the greater the pressure to silence it.

Why responsible sensors are the first to be targeted

People who document carefully, escalate methodically, and remain calm are often more threatening than those who act chaotically.

They are credible. They create records. They cannot be easily dismissed as noise.

So the system shifts focus. Not to the disturbance itself, but to the person reporting it.

Once that happens, a subtle inversion occurs.

The sensor becomes the problem.

The long-term damage

A system that attacks its sensors does not eliminate dysfunction. It guarantees blindness.

Problems escalate until they become crises, because no one intervened when intervention was still cheap. People stop reporting early warning signs, having learned that accuracy is punished more reliably than silence. Those who remain attentive absorb harm quietly or disengage entirely. And the system becomes dependent on the tolerance of its most conscientious members — the very people it has been eroding.

Outward calm is preserved at the cost of internal decay. This is not stability. It is a collapse already in progress, only delayed.

The punishment of awareness

This is where real damage begins.

People who report ongoing problems do not simply feel ignored. They are worn down.

Sleep breaks. Stress accumulates. Daily life contracts. Windows stay shut. Rooms are rearranged. Work suffers. Health degrades. People begin planning exits rather than solutions.

Some leave. Those who cannot leave adapt by absorbing harm.

The system benefits from this adaptation. Fewer complaints register as success. Silence is misread as resolution. Tolerance is mistaken for stability.

This is not accidental. It is a transfer of cost.

The moral distortion

Perhaps the most corrosive effect is ethical.

When people who raise concerns are punished, the system implicitly teaches that awareness is liability and silence is safety.

Those who are affected but quiet are rewarded. Those who speak carefully are treated as threats.

Over time, responsibility itself is reframed as troublemaking.

What this is not

This is not about perfection. No system can respond to every signal effectively.

This is about direction.

A functioning system may struggle, but it still treats signals as inputs. A failing system treats signals as attacks.

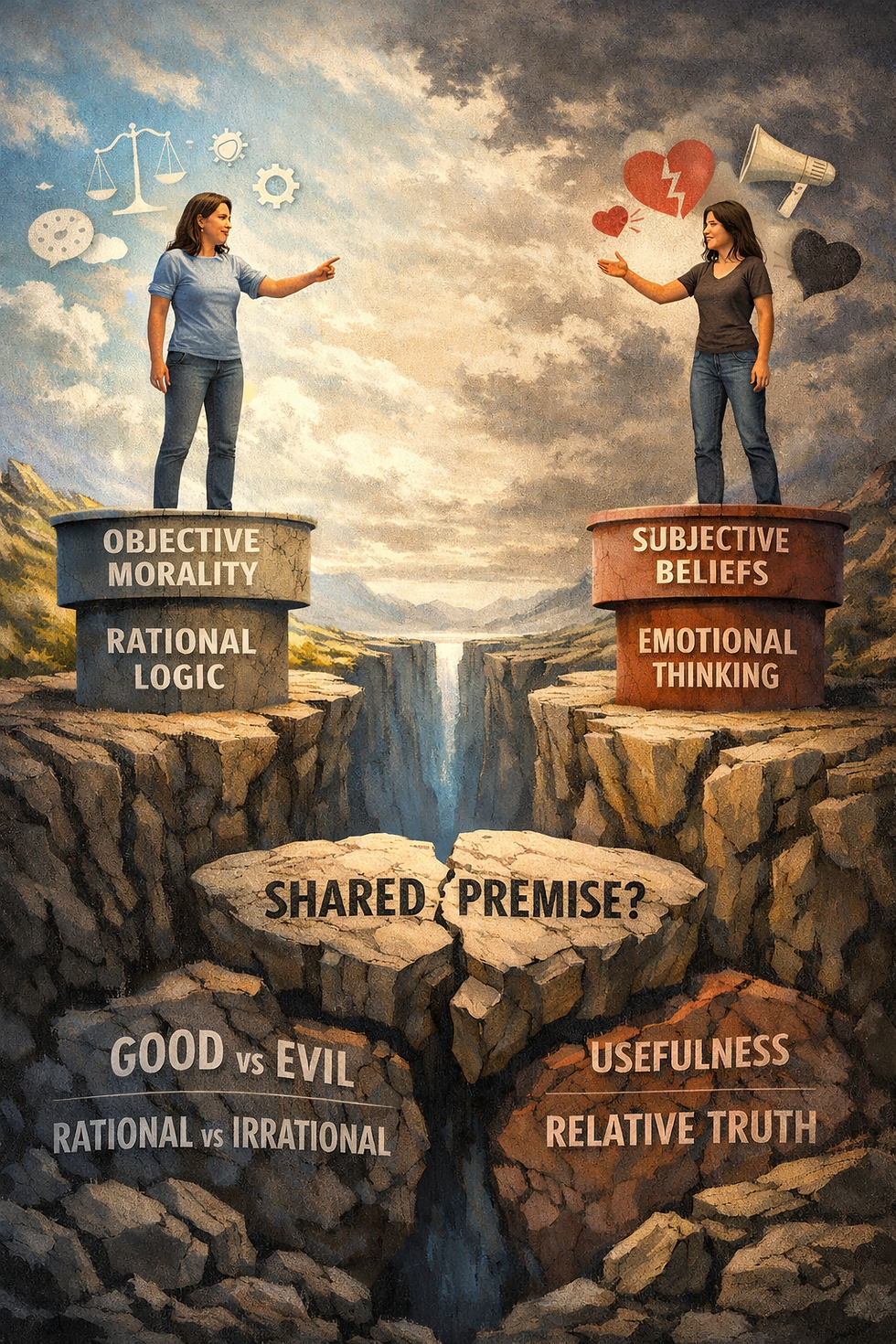

The difference is not competence. It is orientation toward truth.

A necessary boundary

Recognizing this failure mode does not obligate individuals to keep sacrificing themselves to expose it.

Self-protection is not complicity.

When a system consistently attacks its sensors, the rational response may be distance, documentation, or quiet containment rather than continued engagement.

The indictment already exists. It is written into the system's behavior.

Conclusion

A system that attacks its sensors is not merely inefficient. It is structurally hostile to reality.

Such systems do not fail because they lack information. They fail because they reject it. And when the collapse finally arrives — as it must, because reality is not optional — it will not look like a sudden rupture. It will look like the inevitable end of a long, well-maintained calm.
